Reproducible Research: Principles and Practice

Introduction

This tutorial introduces reproducible research — the practices, tools, and norms that allow scientific findings to be independently verified, extended, and built upon. The ability to reproduce a study’s results is one of the foundational commitments of empirical science, yet surveys consistently show that a substantial proportion of published findings fail to survive independent scrutiny. This has come to be called the reproducibility crisis, and it has reshaped conversations about research practice across the natural sciences, social sciences, and increasingly the humanities and linguistics.

The tutorial covers the core conceptual vocabulary of reproducibility (reproduction, replication, robustness, triangulation, transparency), the different levels of reproducibility a study can achieve, and the practical strategies — from folder organisation and documentation to version control, computational notebooks, and pre-registration — that researchers can adopt to make their work more transparent and trustworthy. A dedicated section addresses reproducibility specifically in corpus linguistics and computational humanities, where the crisis has begun to receive sustained attention (Schweinberger 2026; Flanagan 2025; Sönning and Werner 2021).

By the end of this tutorial you will be able to:

- Define and distinguish the key concepts: reproduction, replication, robustness, triangulation, and transparency

- Explain what the reproducibility crisis is, what caused it, and how it has developed in the sciences and in linguistics

- Identify the four main reasons why research fails to reproduce — methodological, data, computational, and publication-related

- Describe the five levels of the reproducibility spectrum and choose an appropriate target level for a given study

- Implement a standard project folder structure, file-naming convention, README, codebook, and analysis log

- Use Git and GitHub for version control in a research project

- Create a computational notebook (R Markdown or Quarto) that integrates code, output, and narrative

- Manage a project’s computational environment using renv and sessionInfo()

- Share data and code via a repository (OSF, Zenodo, or institutional RDM) and obtain a persistent DOI

- Pre-register a study and explain how registered reports prevent publication bias

This tutorial assumes basic familiarity with R and with quantitative research methods. No prior knowledge of version control or open science practices is required. Readers may benefit from first completing:

Martin Schweinberger. 2026. Reproducible Research: Principles and Practice. The Language Technology and Data Analysis Laboratory (LADAL), The University of Queensland, Australia. url: https://ladal.edu.au/tutorials/repro/repro.html (Version 2026.03.28).

Why reproducibility matters

The reproducibility crisis

The reproducibility crisis is not a distant problem in other disciplines. A landmark survey of scientists across fields found that over 70% had tried and failed to reproduce another researcher’s experiments, and more than 50% had failed to reproduce their own (Baker 2016). A large-scale replication project in psychology successfully replicated only 36–47% of published findings, depending on the criterion used (Open Science Collaboration 2015). The cost of irreproducible preclinical research has been estimated at $28 billion per year in the United States alone (Freedman, Cockburn, and Simcoe 2015).

The consequences extend beyond wasted resources. When influential findings fail to replicate, public confidence in science erodes. Funding agencies and journals have responded by mandating greater transparency — requiring data and code sharing, pre-registration, and more complete statistical reporting.

The crisis reaches linguistics

For a long time, linguistics seemed insulated from these concerns. But the field has increasingly come under scrutiny. Sönning and Werner (2021) document how the broader replication crisis applies to linguistic research, identifying structural vulnerabilities including small samples, flexible analytical choices, and limited data sharing. The special issue they guest-edited in Linguistics (“Special Issue: The Replication Crisis: Implications for Linguistics” 2021) brought systematic attention to these issues.

In corpus linguistics specifically, Schweinberger (2026) and Schweinberger and Haugh (2025b) argue that the field faces reproducibility challenges that are both general (underpowered studies, analytical flexibility, publication bias) and discipline-specific (proprietary corpora, non-shared query scripts, undocumented annotation decisions). Flanagan (2025) provides an empirical assessment of reproducibility, replicability, robustness, and generalizability across corpus-linguistic studies, finding substantial room for improvement across all four dimensions. Schweinberger (2025) draws implications for how corpus linguists can redesign their workflows, reporting conventions, and publication practices to meet contemporary transparency standards.

Timeline of the crisis

Late 1990s – early 2000s: Failed replications begin to accumulate in medical research (Ioannidis 2005). Seminal psychology experiments prove difficult to reproduce. Questions about questionable research practices emerge.

2010s: The Reproducibility Project: Psychology (2015) provides the first large-scale systematic evidence (Open Science Collaboration 2015). The crisis spreads to economics and other social sciences (Anderson et al. 2016). A Nature survey across disciplines reveals widespread concern (Baker 2016). The open science movement begins to organise around concrete solutions.

2020s: Linguistics and corpus linguistics enter the conversation (Sönning and Werner 2021; Schweinberger 2026; Flanagan 2025). Funder and journal mandates for transparency become widespread. Tools and training for reproducible research become broadly available. The emphasis shifts from diagnosis to solutions.

Why research fails to reproduce

Four categories of failure account for most reproducibility problems (Goodman, Fanelli, and Ioannidis 2016; Munafò and Davey Smith 2018):

Methodological issues (approx. 40% of failures): Insufficient documentation of procedures, underpowered studies, inappropriate statistical methods, and decisions made during analysis that were not pre-specified and are not reported.

Data problems (approx. 35%): Data unavailable, data processing errors, undocumented handling of outliers or missing values, and raw data that has been lost or silently modified.

Computational issues (approx. 25%): Software versions not recorded, code not shared or not documented, random seeds not set, and computing environments not described — meaning that even with the same data and ostensibly the same method, results cannot be reproduced.

Publication bias: Positive results are preferentially submitted and accepted. Negative results are filed away. Researchers engage in p-hacking (running multiple analyses until a significant result appears) and HARKing (Hypothesizing After Results are Known), which inflates the apparent rate of significant findings in the published literature.

Benefits of reproducible research

For science: Reproducible findings are buildable findings — other researchers can extend, replicate, and meta-analyse them. Resources are not wasted reproducing basic infrastructure. Public trust in the enterprise is maintained.

For your career: Papers with publicly available data tend to receive more citations (Piwowar and Vision 2013). Data and code sharing increases visibility and collaboration opportunities. Funder and journal requirements are increasingly making reproducibility a condition of publication and grant success.

For you personally: Future you will be able to return to a project, understand what was done, and rerun or extend the analysis. Collaborators can contribute meaningfully. Errors are caught earlier. Work accumulates rather than dispersing.

Part 1: Core Concepts

What you will learn: The five key concepts that together constitute the framework for reproducible research — reproduction, replication, robustness, triangulation, and transparency — and how they relate to each other.

Why this matters: These terms are frequently used imprecisely and interchangeably in the literature. Precise definitions are the foundation for making principled decisions about research design and reporting.

Replication

Replication means repeating a study’s procedure with new data to test whether findings hold.

Formula: Same method + Different data → Similar results?

A replication uses a comparable (not necessarily identical) population and applies similar (not necessarily identical) procedures. It tests the generalizability of an original finding. A successful replication strengthens confidence that the finding reflects a genuine pattern rather than sample-specific noise. A failed replication does not automatically refute the original; it opens a productive scientific question about the boundary conditions of the effect.

Three main types of replication are commonly distinguished:

Direct (or close) replication keeps procedures as identical as possible and draws a different sample from the same population. This tests whether the original result was sample-specific.

Conceptual replication tests the same underlying hypothesis using different procedures or measures, asking whether the result is method-specific.

Constructive (or extended) replication adds new conditions or extends the original to new populations, testing boundary conditions and advancing theory.

In corpus linguistics, replication has a specific character: it typically means applying the same analytical approach to a different corpus or a different time period, testing whether a lexicogrammatical pattern, frequency finding, or collocation holds across data sources (Flanagan 2025; Schweinberger 2026).

Reproduction

Reproduction (also called computational reproducibility or computational replication) means repeating the analysis with the same data and the same method to verify that the reported results can be obtained.

Formula: Same method + Same data → Identical results

Reproduction is sometimes called repeatability (McEnery and Brezina 2022) or analytic reproducibility. It is a minimal baseline: if a study cannot be reproduced computationally, there is no way to verify that the reported results are correct, extend the analysis, or build on it.

Three levels can be distinguished (Schweinberger 2025):

Computational reproducibility is the strictest form: the same code applied to the same data on the same or equivalent computing environment produces bit-identical results.

Practical reproducibility relaxes the environment requirement: another researcher can run the analysis on a different machine with modest effort, producing results that are substantively equivalent even if numerically trivial differences arise.

Formal (or theoretical) reproducibility requires only that the documentation is in principle sufficient for reproduction, even if in practice the data or code are not shared.

Practical reproducibility, the second of these three levels, is the minimum that should be aimed for in all quantitative research.
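To make the idea of a reproduction check concrete, the following is a minimal sketch in base R. It runs the same analysis twice on the same simulated data with the same random seed and confirms that the two results match. The function name `run_analysis()` and the simulated data are illustrative assumptions and do not come from any of the cited studies.

```r
# Hypothetical analysis: bootstrap the mean of a fixed data set.
run_analysis <- function(data, seed = 42) {
  set.seed(seed)  # fixing the seed makes the resampling repeatable
  boot_means <- replicate(200, mean(sample(data, replace = TRUE)))
  c(estimate = mean(data), boot_se = sd(boot_means))
}

# Simulated "shared" data set (illustrative only)
set.seed(1)
scores <- rnorm(50, mean = 100, sd = 15)

first_run  <- run_analysis(scores)
second_run <- run_analysis(scores)

# Same method + same data + same seed: the two runs should match exactly
identical(first_run, second_run)
```

If the call to `set.seed()` were removed, the bootstrap standard error would generally differ between runs, which is one reason unrecorded seeds undermine reproduction.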

Robustness

Robustness means that results remain substantively stable when different analytical procedures are applied to the same or similar data.

Formula: Different methods + Same/similar data → Consistent conclusions?

A finding is robust if its direction and approximate magnitude are maintained across reasonable alternative analytical choices — different statistical models, different variable operationalisations, different ways of handling outliers or missing data. Robustness checks do not test whether the finding generalises to new data (that is replication’s job), but whether it is an artefact of specific analytical decisions.

Flanagan (2025) demonstrates that robustness — alongside reproducibility, replicability, and generalizability — is a distinct and separately assessable property of corpus-linguistic research. A study can be computationally reproducible yet fragile: reproduce it exactly and you get the same numbers, but change the corpus composition or the frequency threshold and the finding disappears.
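A robustness check can be sketched in a few lines of base R. The example below is an illustration under stated assumptions: the data are simulated, and the three specifications (all data, trimmed data, rank-based association) are just one plausible set of alternative analytical choices. Because the three estimates are on different scales, only their direction is compared.

```r
# Simulated data (illustrative only): y depends on x, plus a few extreme values
set.seed(123)
n <- 200
x <- rnorm(n)
y <- 0.5 * x + rnorm(n)
y[1:3] <- y[1:3] + 8  # three artificially extreme observations

# Specification 1: ordinary least squares on all data
fit_all <- lm(y ~ x)

# Specification 2: exclude observations more than 3 SD from the mean of y
keep <- abs(y - mean(y)) <= 3 * sd(y)
fit_trimmed <- lm(y ~ x, subset = keep)

# Specification 3: rank-based (Spearman) association instead of a linear model
rho <- cor(x, y, method = "spearman")

estimates <- c(
  ols_all     = unname(coef(fit_all)["x"]),
  ols_trimmed = unname(coef(fit_trimmed)["x"]),
  spearman    = rho
)
estimates

# The direction of the finding is robust if every specification agrees on the sign
all(sign(estimates) == sign(estimates[1]))
```

Reporting all three estimates, rather than only the most favourable one, lets readers judge for themselves how sensitive the finding is to the analytical choices.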

Triangulation

Triangulation means using multiple approaches — different methods, data sources, or theoretical perspectives — to address a single research question.

Formula: Multiple approaches → Converging evidence?

Each individual method or dataset has its own limitations and assumptions. When multiple independent approaches converge on the same conclusion, confidence in that conclusion is substantially strengthened. When they diverge, that divergence is itself an important empirical finding that calls for explanation.

Four types of triangulation are commonly distinguished: data triangulation (multiple datasets, time periods, or populations), method triangulation (quantitative and qualitative, experimental and observational, multiple statistical approaches), investigator triangulation (multiple researchers independently analysing the same data), and theory triangulation (multiple theoretical frameworks applied to the same phenomenon) (Munafò and Davey Smith 2018).

Transparency

Transparency means clear, comprehensive reporting of all aspects of the research process, sufficient for others to understand, evaluate, and build upon the work.

Formula: Complete information → Others can understand and evaluate

Transparency is the enabling condition for all other aspects of reproducibility. A study cannot be reproduced if the methods are opaque; it cannot be replicated if the procedures are not described; its robustness cannot be assessed if analytical decisions are hidden.

Transparency operates at multiple levels (Schweinberger and Haugh 2025a):

Design transparency: Research questions stated upfront, hypotheses pre-registered where applicable, sampling strategy documented, power analysis reported.

Data transparency: Collection methods detailed, processing steps documented, raw data shared where ethically permissible, deviations from the planned procedure noted.

Analysis transparency: All analyses reported (not only significant ones), code shared, software versions specified, decision-making explained.

Results transparency: Full results including null findings, confidence intervals and effect sizes reported, alternative explanations considered.

Transparency in corpus linguistics has some domain-specific dimensions (Schweinberger and Haugh 2025a; Schweinberger 2025). Corpus query scripts must be shared and annotated; decisions about corpus composition and sampling must be documented; annotation schemes must be described with sufficient detail for replication; and where a proprietary corpus is used, the subset of data drawn on should be described as precisely as copyright allows. Schweinberger and Haugh (2025a) show that even interpretive corpus pragmatics — a tradition not primarily associated with computational reproducibility — has much to gain from greater transparency about analytical decisions and interpretive processes.

Relationships between concepts

The five concepts are not independent — they form a hierarchy:

               TRANSPARENCY
               (Foundation)
                     ↓
      ┌──────────────┼──────────────┐
      ↓              ↓              ↓
REPRODUCTION    REPLICATION    ROBUSTNESS
 (Same data)    (New data)   (Alt. methods)
      ↓              ↓              ↓
      └──────────────┼──────────────┘
                     ↓
               TRIANGULATION
           (Multiple approaches)
                     ↓
            RELIABLE KNOWLEDGE

Transparency enables all other activities. Reproduction verifies computational accuracy. Replication tests generalizability across samples and contexts. Robustness checks confirm that results are not artefacts of specific analytical choices. Triangulation provides the strongest possible evidence by drawing multiple independent lines of inquiry to the same conclusion.

Q1. A researcher runs the same analysis script on the same dataset and obtains the same statistical results as the original paper. Which concept does this exemplify?

Q2. A corpus linguist replicates a study of hedging in academic writing, but uses a different corpus (a different institutional variety) and finds a similar pattern. The original finding is thus supported. This is an example of:

Part 2: The Reproducibility Spectrum

What you will learn: The five levels of reproducibility, from non-reproducible to fully open-science practice — and how to choose the appropriate level for your own work.

Why this matters: Not all research needs the same level of reproducibility. Understanding the spectrum allows you to make proportionate, realistic commitments rather than treating reproducibility as an all-or-nothing requirement.

Level 0: not reproducible

Characteristics: No data or code available; insufficient methodological detail to understand what was done; results cannot be independently verified.

When acceptable: Never, for published empirical research.

The cost: Claims cannot be evaluated, errors are undetectable, and science cannot advance cumulatively. As Munafò and Davey Smith (2018) note, non-reproducibility does not simply fail to advance knowledge — it actively misleads subsequent researchers who may invest resources attempting to build on findings that were never solid.

Level 1: reproducible publication

Characteristics: Detailed methods section; complete statistical reporting (test statistics, degrees of freedom, exact p-values, effect sizes, confidence intervals); supplementary materials; data availability statement.

What this enables: Understanding what was done; critical evaluation of the methods and analysis; conceptual replication.

Appropriate for: Theoretical papers, systematic reviews, qualitative research with appropriately detailed accounts of the analytical process.
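As an illustration of what complete statistical reporting involves, the sketch below collects the test statistic, degrees of freedom, exact p-value, effect size, and confidence interval for a two-group comparison in base R. The data are simulated, and the choice of a Welch t-test with Cohen's d is an assumption made for the example; the confidence interval is the one `t.test()` returns for the difference in means.

```r
# Simulated two-group data (illustrative only)
set.seed(2024)
control   <- rnorm(40, mean = 50, sd = 10)
treatment <- rnorm(40, mean = 45, sd = 10)

# Welch two-sample t-test (the default for t.test in base R)
tt <- t.test(treatment, control)

# Cohen's d using the pooled standard deviation (equal group sizes)
pooled_sd <- sqrt((var(treatment) + var(control)) / 2)
cohens_d  <- (mean(treatment) - mean(control)) / pooled_sd

# Collect the quantities a complete report should contain
report <- data.frame(
  t        = unname(tt$statistic),
  df       = unname(tt$parameter),
  p        = tt$p.value,
  cohens_d = cohens_d,
  ci_lower = tt$conf.int[1],
  ci_upper = tt$conf.int[2]
)
print(report, digits = 3)
```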

Level 2: reproducible analysis

Characteristics: Data publicly available (or available on reasonable request, with a data access agreement); analysis code shared; codebook or data dictionary provided; basic documentation in a README file.

What this enables: Verification of reported results; alternative analyses on the same data; extension and development of the work.

Appropriate for: All quantitative research; computational analyses; published datasets.

Minimum requirements: A data sharing agreement or licence, commented analysis code, and a README file that explains how to reproduce the analysis.

Level 2 should be the minimum standard for all quantitative corpus-linguistic and computational research (Schweinberger 2025; Flanagan 2025).

Level 3: fully reproducible

Characteristics: Complete workflow documented from raw data to final output; version-controlled code with commit history; computational environment specified (R package versions, system dependencies); automation where possible.

What this enables: Push-button reproduction on a different machine; numerically identical results; long-term reproducibility as software environments evolve.

Appropriate for: Computational research; complex analyses with many interdependent steps; high-stakes findings with policy implications.

Requirements: Environment management with renv or conda; dependency documentation; automated workflows; comprehensive README.
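One simple way to approach an automated workflow in R is a master script that runs every analysis step in a fixed order. The sketch below is only an illustration: `run_pipeline()` is a hypothetical helper, and the script names in the example call assume the numbered-script layout used in the folder template of Part 3.

```r
# Run a sequence of analysis scripts in order, stopping at the first problem.
# `scripts` is a character vector of file paths.
run_pipeline <- function(scripts) {
  missing <- scripts[!file.exists(scripts)]
  if (length(missing) > 0) {
    stop("Missing script(s): ", paste(missing, collapse = ", "))
  }
  for (script in scripts) {
    message("Running ", script)
    # Each script runs in its own environment so objects do not leak between steps
    source(script, local = new.env())
  }
  invisible(scripts)
}

# Example call (hypothetical file names):
# run_pipeline(c("code/01_clean.R", "code/02_analyze.R", "code/03_visualize.R"))
```

Running each script in a fresh environment forces every step to read its inputs from disk, which makes hidden dependencies between steps visible early.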

Level 4: reproducible science ecosystem

Characteristics: Pre-registration of hypotheses and analysis plan before data collection; registered reports (peer review before data are collected); open materials; open peer review; null results published.

What this enables: Prevention of p-hacking and HARKing; publication of null results; complete transparency about confirmatory vs. exploratory analyses; cumulative science.

Appropriate for: Experimental research; hypothesis-testing studies; contested areas; high-impact claims.

The registered report format — in which journals peer-review the study design and grant in-principle acceptance before data are collected — is increasingly recognised as one of the most powerful structural solutions to publication bias. A list of journals offering registered reports is maintained at cos.io/rr.

Choosing your level

Level 2 is the minimum for all quantitative research involving original data collection, corpus compilation, or computational analysis. This includes the vast majority of corpus-linguistic studies.

Levels 3–4 are strongly recommended when: findings are high-stakes or policy-relevant; the study involves a large computational pipeline; the research area is contested; you want maximum credibility and impact.

Practical constraints: Ethical obligations may prevent full data sharing (use deidentification, synthetic data, or a restricted-access repository). Copyright may restrict sharing of proprietary corpus material (share query scripts and frequency tables instead). Field norms and funder requirements also shape what is feasible.

A key principle: partial reproducibility is far better than none. Sharing your code without the data is valuable. Sharing a processed dataset without the raw data is valuable. Document what you cannot share and why.
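Where direct identifiers are the obstacle to sharing, a first step towards deidentification can be sketched as follows. This is a toy example with invented data; it only replaces names with random participant codes, and real datasets usually need further checks (for example on indirect identifiers) before they can be shared.

```r
# Toy data with a direct identifier (illustrative only)
survey <- data.frame(
  name  = c("Ana", "Ben", "Ana", "Cho"),
  age   = c(34, 29, 34, 41),
  score = c(12, 15, 14, 9),
  stringsAsFactors = FALSE
)

# Map each distinct name to a random participant code
set.seed(99)  # fixed only so that this example is repeatable
people <- unique(survey$name)
codes  <- sprintf("P%03d", sample(seq_along(people)))
lookup <- setNames(codes, people)

shareable <- survey
shareable$participant <- unname(lookup[survey$name])
shareable$name <- NULL  # drop the direct identifier before sharing

shareable
# Keep `lookup` in a secure location and never deposit it with the data
```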

Q3. A corpus study shares the analysis R script and the frequency tables extracted from a proprietary corpus, but cannot share the corpus itself due to licensing restrictions. Which level of the reproducibility spectrum does this best correspond to?

Part 3: Practical Strategies

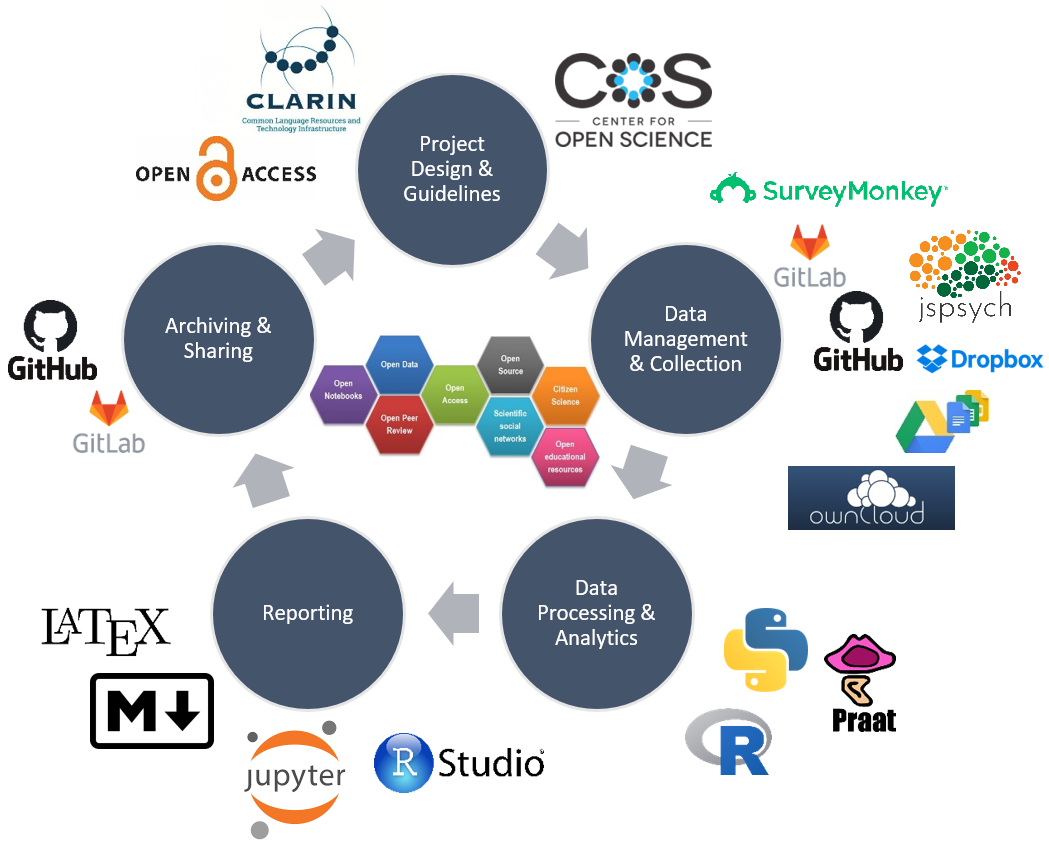

What you will learn: Seven practical strategies for making research reproducible — project organisation, documentation, version control, computational notebooks, environment management, data sharing, and pre-registration.

These are not abstract ideals but concrete, implementable practices. Each strategy can be adopted incrementally; you do not need to implement everything at once.

1. Project organisation

Standard folder structure

A consistent folder structure makes any project navigable by anyone (including future you) and enables automated workflows. The following template is widely used in research:

ProjectName/
├── README.md
├── data/
│   ├── raw/            ← Never edit these files!
│   ├── processed/
│   └── metadata/
├── code/
│   ├── 01_clean.R
│   ├── 02_analyze.R
│   └── 03_visualize.R
├── output/
│   ├── figures/
│   ├── tables/
│   └── reports/
├── docs/
│   ├── manuscript/
│   ├── presentations/
│   └── notes/
└── environment/
    ├── renv.lock
    └── Dockerfile

Raw data files should never be edited after they are deposited in data/raw/. All transformations are applied in code, so the raw-to-processed pathway is always traceable and reproducible.
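The folder template can also be created programmatically, which keeps the layout identical across projects. The following is a minimal sketch in base R; `create_project()` is a hypothetical helper rather than a function from an existing package, and the example writes to a temporary directory so that nothing is created in your working directory.

```r
# Create the project skeleton under a root directory.
create_project <- function(root) {
  folders <- c(
    "data/raw", "data/processed", "data/metadata",
    "code",
    "output/figures", "output/tables", "output/reports",
    "docs/manuscript", "docs/presentations", "docs/notes",
    "environment"
  )
  for (folder in file.path(root, folders)) {
    dir.create(folder, recursive = TRUE, showWarnings = FALSE)
  }
  # Add an empty README so the project root always has a place for documentation
  readme <- file.path(root, "README.md")
  if (!file.exists(readme)) file.create(readme)
  invisible(root)
}

# Try it in a temporary location first
project_root <- file.path(tempdir(), "ProjectName")
create_project(project_root)
list.dirs(project_root, full.names = FALSE, recursive = FALSE)
```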

File naming conventions

Good file names are self-documenting and sort logically. A useful formula:

YYYY-MM-DD_project_description_version.extension

Use dates (ISO format), underscores rather than spaces, and meaningful descriptions. Avoid:

finalFINAL.R       # Which final?
use_this_one.R     # As opposed to which one?
analysis (1).R     # Version control does this better

2. Documentation

The bus factor

The bus factor of a project is the number of team members who would need to be suddenly unavailable for the project to fail. For most academic research, the bus factor is 1 — that person is you. Documentation is the technical solution to this problem: it ensures that the project can continue, be reproduced, or be extended even if you are no longer available to explain it in person.

README file

Every project repository should contain a README file at the root level. A minimal README includes a description of the research question and approach, the repository structure, instructions for reproducing the analysis, information about the data (source, size, access), software requirements, a citation, and a licence. The README is the first thing any new collaborator or reviewer reads; it should contain everything they need to get started.

Codebooks

A codebook documents every variable in a dataset: its name, type, description, units, permitted values, missing value codes, and any notes about how it was created or processed. Without a codebook, even a fully shared dataset is difficult to use, because variable names alone rarely communicate everything a downstream user needs to know.
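Part of a codebook can be drafted automatically from the data itself. The sketch below, in base R, builds a skeleton with one row per variable; `make_codebook()` and the toy data are hypothetical, and the descriptions, units, and missing-value codes still have to be written by hand.

```r
# Build a codebook skeleton from a data frame.
make_codebook <- function(df) {
  data.frame(
    variable    = names(df),
    type        = vapply(df, function(col) class(col)[1], character(1)),
    n_missing   = vapply(df, function(col) sum(is.na(col)), integer(1)),
    n_unique    = vapply(df, function(col) length(unique(col[!is.na(col)])), integer(1)),
    description = "",  # to be filled in manually
    row.names   = NULL,
    stringsAsFactors = FALSE
  )
}

# Toy data (illustrative only)
toy <- data.frame(
  participant_id = c("P001", "P002", "P003"),
  condition      = factor(c("control", "treatment", "control")),
  anxiety_post   = c(21.5, NA, 17.0)
)

codebook <- make_codebook(toy)
codebook
# write.csv(codebook, "data/metadata/codebook.csv", row.names = FALSE)
```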

Analysis logs

An analysis log is a dated record of every significant analytical decision: what data were cleaned and how, what outliers were identified and what was done with them, what models were run and what the results were, and why decisions were made when multiple options were available. Analysis logs are especially valuable when results change during revisions, when a collaborator picks up the work, or when a reviewer asks about a decision made months earlier.
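An analysis log does not need special tooling. As a minimal sketch, the helper below appends a dated line to a plain-text file each time a decision is made; `log_decision()`, the default file name, and the example entries are all hypothetical.

```r
# Append a dated entry to a plain-text analysis log.
log_decision <- function(entry, log_file = "analysis_log.md") {
  line <- sprintf("- %s: %s", format(Sys.Date(), "%Y-%m-%d"), entry)
  cat(line, "\n", sep = "", file = log_file, append = TRUE)
  invisible(line)
}

# Example entries written to a temporary file
log_path <- file.path(tempdir(), "analysis_log.md")
log_decision("Excluded 3 participants with incomplete post-test data.", log_path)
log_decision("Log-transformed reaction times because of strong right skew.", log_path)
readLines(log_path)
```

Keeping the log as a text file inside the project folder means it is versioned together with the code it describes.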

3. Version control with Git

Git tracks every change to every file in a repository, recording who made the change, when, and (with a good commit message) why. This provides a complete, recoverable history of the project.

For researchers, the key benefits are that no work is ever permanently lost (any earlier state can be restored), experiments can be tried freely (a failed experiment can always be reverted), collaboration is managed systematically (changes from multiple contributors are merged with full attribution), and the evolution of the project is documented for reviewers, replicators, and future readers.

Core workflow:

# 1. Initialise repository
git init

# 2. Stage changes
git add analysis.R

# 3. Commit with a descriptive message
git commit -m "Add demographic variables to regression model"

# 4. Push to GitHub (if using remote)
git push origin main

Good commit messages are active-voice descriptions of what changed and why: “Fix calculation error in summary statistics”, “Remove outliers based on preregistered criteria”, “Update figure labels for manuscript submission”. Unhelpful messages (“stuff”, “changes”, “final version (really!)”) defeat the purpose.

RStudio provides a built-in Git interface — enabling staging, committing, and pushing through a graphical panel without requiring command-line familiarity. Set it up via Tools → Project Options → Git/SVN.

4. Computational notebooks

Computational notebooks (R Markdown, Quarto, Jupyter) integrate code, output, and narrative prose in a single document. For reproducible research they have three key advantages: the analysis is self-documenting (reasoning is written next to code), the output is embedded (figures, tables, and statistics are produced by the same document that describes them), and the document renders to multiple formats (HTML for sharing, PDF for archiving, Word for journals).

The following minimal example in Quarto illustrates the structure:

---
title: "Analysis of Survey Data"
author: "Your Name"
date: "2024-02-10"
format: html
---

# Introduction

This analysis examines the effect of mindfulness training on anxiety scores
(N = 150).

**Hypothesis**: Mindfulness training will reduce anxiety scores vs. control.

# Setup

```{r}
library(tidyverse)
library(lme4)

data <- read_csv("../data/processed/survey_clean.csv")
set.seed(42)
```

# Descriptive Statistics

```{r}
data |>
  group_by(condition) |>
  summarise(n = n(), M = mean(anxiety_post), SD = sd(anxiety_post))
```

# Main Analysis

```{r}
model <- lmer(anxiety_post ~ condition + anxiety_pre + (1|participant_id),
              data = data)
summary(model)
```

**Result**: Significant effect of condition, β = −13.2, t = −4.5, p < .001.

5. Managing computational environments

Code that runs on your machine today may fail on a collaborator’s machine, or on your own machine two years from now, because software packages are updated and interfaces change. Documenting and managing the computational environment is therefore an essential part of reproducibility.

Using renv

The renv package manages project-specific R package libraries, recording the exact version of every package your project depends on in a lockfile (renv.lock). A collaborator who runs renv::restore() will install exactly the same package versions, regardless of what is currently installed on their system.

# One-time project setup
renv::init()

# After installing or updating packages, record the state
renv::snapshot()

# Collaborator restores exact environment
renv::restore()

Recording session information

Always include sessionInfo() at the end of every analysis script or notebook. This records the R version, platform, and all loaded package versions, providing a permanent record even if the renv.lock file is lost.
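One way to follow this advice is to write the session information to a text file that is stored with the analysis outputs. The sketch below uses only base R; the file name is an assumption, and the temporary directory should be replaced with a folder inside your project.

```r
# Save session information alongside the analysis outputs.
# The path is a placeholder; in a real project use e.g. "output/reports/".
session_file <- file.path(tempdir(), "session_info.txt")
writeLines(capture.output(sessionInfo()), session_file)

# The first line records the R version used for the run
readLines(session_file, n = 1)
```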

Docker (advanced)

For ultimate long-term reproducibility — particularly for complex dependencies or high-stakes computational research — Docker containers capture the entire computing environment including the operating system, R installation, and all packages. The container runs identically on any machine that can run Docker, and its state is frozen at the time of creation.

6. Data sharing and DOIs

A Digital Object Identifier (DOI) is a persistent link — unlike a URL, it does not break when a website is reorganised. Sharing data and code under a DOI enables proper citation, tracks impact, satisfies funder and journal requirements, and ensures long-term accessibility.

Recommended repositories:

- Zenodo (zenodo.org) — free, open, supports large files (up to 50 GB per dataset), integrates with GitHub, and issues DOIs automatically.

- Open Science Framework (OSF) (osf.io) — free, integrates project management and pre-registration alongside data hosting.

- UQ Research Data Manager (research.uq.edu.au/rmbt/uqrdm) — free for UQ researchers, meets Australian funder requirements.

- Figshare (figshare.com) — free for public data, good visualisation tools.

Minimum to share: The final analysed dataset (deidentified if necessary), all analysis code, a README, and a codebook. Recommended additions: Raw data (if shareable), processing scripts, the rendered analysis notebook, and all study materials (survey instruments, annotation guidelines, etc.).

Data should be cited in reference lists just as articles are, using the standard format: Author(s). (Year). Title of dataset [Data set]. Repository. DOI.
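The citation pattern above is easy to generate consistently with a small helper. The sketch below is illustrative only: `cite_dataset()` is a hypothetical function, and the author names, title, and DOI are placeholders rather than a real dataset. The author string is expected to end in a full stop, as it does for initials.

```r
# Format a dataset citation following the pattern
# Author(s). (Year). Title of dataset [Data set]. Repository. DOI.
cite_dataset <- function(authors, year, title, repository, doi) {
  sprintf("%s (%s). %s [Data set]. %s. https://doi.org/%s",
          authors, year, title, repository, doi)
}

# All values below are placeholders
citation_text <- cite_dataset(
  authors    = "Doe, J., & Roe, R.",
  year       = 2026,
  title      = "Example corpus frequency tables",
  repository = "Zenodo",
  doi        = "10.0000/placeholder"
)
citation_text
```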

7. Pre-registration

Pre-registration means publicly stating the research question, hypotheses, sample size justification, and analysis plan before data are collected, on a time-stamped public registry. This separates confirmatory from exploratory analysis, prevents post-hoc hypothesis construction (HARKing), and provides a check against p-hacking.

P-hacking involves running many analyses until a significant result is found, then reporting only that analysis. Without pre-registration, there is no record that other analyses were run.

HARKing (Hypothesizing After Results are Known) involves presenting hypotheses in a published paper as if they were formulated before data were collected, when in fact they were generated by examining the data. Pre-registration provides a time-stamped record of what was predicted.

Selective reporting means reporting only the analyses that produced significant or interpretable results. A pre-registered analysis plan creates an expectation of completeness.
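A short simulation can show why these practices matter. The sketch below, in base R, simulates studies in which there is no true effect at all, then compares the false-positive rate of a single pre-specified test with the rate obtained when twenty outcomes are tested and any significant one is reported. All settings (number of studies, outcomes, and group size) are arbitrary choices for illustration.

```r
# Simulate studies with NO true effect in which many outcomes are tested.
set.seed(2026)
n_studies   <- 500  # simulated studies
n_outcomes  <- 20   # outcome variables tested per study
n_per_group <- 30   # participants per group

one_study <- function() {
  p_values <- replicate(n_outcomes, {
    group_a <- rnorm(n_per_group)  # both groups come from the same distribution
    group_b <- rnorm(n_per_group)
    t.test(group_a, group_b)$p.value
  })
  c(first_only = p_values[1] < 0.05,    # single pre-specified test
    any_hit    = any(p_values < 0.05))  # best result after trying all outcomes
}

results <- replicate(n_studies, one_study())
false_positive_rates <- rowMeans(results)
false_positive_rates

# Theoretical rate for 20 independent tests at alpha = .05: roughly 0.64
1 - 0.95^n_outcomes
```

The pre-specified test stays close to the nominal 5% error rate, whereas reporting whichever outcome happens to be significant produces a "finding" in well over half of the simulated studies.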

Pre-registration platforms:

- OSF Registries (osf.io/registries): comprehensive templates, embargoes available

- AsPredicted (aspredicted.org): nine-question form, very quick

Registered reports go further: a journal peer-reviews the study design before data collection and grants in-principle acceptance, guaranteeing publication of the results regardless of whether the outcome is significant. This is the most powerful structural solution to publication bias. A growing list of journals offering registered reports (including linguistics journals) is maintained at cos.io/rr.

Q4. A researcher wants to ensure their R-based corpus analysis can be exactly reproduced by a colleague on a different computer. Which tool is most directly designed to solve this problem?

Part 4: Reproducibility in Corpus Linguistics

What you will learn: How the general challenges of reproducibility apply specifically to corpus linguistics — including corpus compilation, annotation, querying, and the balance between quantitative and qualitative interpretation.

Key references: Schweinberger (2026); Schweinberger and Haugh (2025b); Flanagan (2025); Schweinberger (2025); Schweinberger and Haugh (2025a); Sönning and Werner (2021)

The reproducibility landscape in corpus linguistics

Corpus linguistics occupies an interesting position in the reproducibility landscape. On one hand, it is an empirical, data-driven field that should in principle be well-placed to share data, scripts, and outputs. On the other hand, several structural features of the field have historically worked against reproducibility (Schweinberger 2026; Schweinberger and Haugh 2025b):

Proprietary corpora: Major corpora (BNC, ICE, COCA, and many others) are licensed rather than open. Researchers cannot share the underlying data, which limits verification and replication. The appropriate response is to share query scripts, frequency tables, concordance samples, and derived datasets that do not reproduce the original copyrighted text.

Undocumented analytical decisions: Corpus analysis typically involves many decisions — how words and constructions are operationalised, what search terms are used, how ambiguous or borderline cases are handled, what frequency thresholds are applied, which tokens are excluded and why. These decisions profoundly affect results but are often reported only partially or not at all.

Mixed-methods workflows: Many corpus-linguistic studies combine quantitative frequency analysis with qualitative interpretation of concordance lines. The quantitative component can in principle be reproduced; the qualitative component requires transparency about how categories were developed, how disagreements were resolved, and how representative examples were selected (Schweinberger and Haugh 2025a).

Annotation variability: Studies using annotated corpora (POS-tagged, parsed, semantically annotated) inherit the reliability and validity assumptions of the annotation system. These are rarely reported in detail, yet they can substantially affect which tokens are retrieved.
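For the proprietary-corpora case described above, one possible way to produce a shareable derived dataset is a word-frequency table. This is a minimal sketch: the two toy sentences stand in for licensed text, the tokenisation is deliberately crude, and the output file name is hypothetical. Whether a given derived dataset may be shared still depends on the corpus licence.

```r
# Toy stand-in for licensed corpus text (hypothetical; a real corpus
# would be read from a local file that is not shared).
texts <- c("The cat sat on the mat.", "The dog sat on the log.")

# Lowercase, strip punctuation, and split on whitespace.
tokens <- unlist(strsplit(tolower(gsub("[[:punct:]]", "", texts)), "\\s+"))

# Build a word-frequency table: derived data that does not reproduce
# the running text.
freq <- as.data.frame(table(tokens), stringsAsFactors = FALSE)
names(freq) <- c("word", "frequency")
freq <- freq[order(-freq$frequency, freq$word), ]

# Written to a temporary folder here; in a project this would go to output/.
out_file <- file.path(tempdir(), "word_frequencies.csv")
write.csv(freq, out_file, row.names = FALSE)
freq
```

Sharing the script together with the table documents the tokenisation decisions as well as the counts.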

Robustness in corpus linguistics

Flanagan (2025) identifies robustness as a particularly important and underexplored dimension in corpus linguistics. Corpus findings are often sensitive to choices that are underreported: the choice of reference corpus in keyword analysis, the frequency threshold used to define a construction, the lemmatisation strategy, or the exclusion of particular registers. Running and reporting sensitivity analyses — varying one parameter at a time while holding others constant — is a practical way to demonstrate that a core finding is not an artefact of these choices.
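A one-parameter-at-a-time sensitivity analysis might look like the following sketch. The data are simulated, and the variable names, the function `register_difference`, and the threshold values are all hypothetical; the point is only the pattern of re-running one core comparison while a single parameter changes.

```r
# Toy per-text frequencies of a construction in two registers
# (simulated data; all names and values are hypothetical).
set.seed(42)
corpus <- data.frame(
  register = rep(c("spoken", "written"), each = 50),
  hits     = c(rpois(50, lambda = 6), rpois(50, lambda = 4))
)

# Core comparison: difference in mean hits between registers, computed
# only on texts that meet a minimum-frequency threshold.
register_difference <- function(data, min_hits) {
  kept  <- data[data$hits >= min_hits, ]
  means <- tapply(kept$hits, kept$register, mean)
  unname(means["spoken"] - means["written"])
}

# Vary one parameter (the threshold) while holding everything else constant.
thresholds <- 0:3
sensitivity <- data.frame(
  min_hits   = thresholds,
  difference = sapply(thresholds, register_difference, data = corpus)
)
sensitivity
```

Reporting the whole table, rather than only the threshold used in the main analysis, lets readers judge how far the finding depends on that choice.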

Q5. A corpus-linguistic study uses a proprietary corpus and cannot share the underlying texts. Which combination of shared materials best satisfies reproducibility requirements?

Part 5: The Reproducibility Checklist

A practical checklist organised by research phase — before starting, during research, before publication, and at publication.

Before starting

Project setup

- Create standard folder structure with raw/, processed/, code/, output/ subdirectories

- Initialise Git repository (git init) and make an initial commit

- Set up renv for package management (renv::init())

- Create README template with project description

- Draft a data management plan
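The folder and README steps above can be scripted. The following is a sketch only: it builds the structure inside a temporary directory so that it is safe to run, and the project name and the `README.md` file name are assumptions.

```r
# Create the standard subdirectories inside a project root.
# A temporary directory is used so the sketch is safe to run;
# in practice project_root would be your own project folder.
project_root <- file.path(tempdir(), "my_project")  # hypothetical name
subdirs <- c("raw", "processed", "code", "output")

for (d in subdirs) {
  dir.create(file.path(project_root, d), recursive = TRUE, showWarnings = FALSE)
}

# Minimal README stub with a placeholder project description.
writeLines(
  c("# Project title", "", "Short project description goes here."),
  file.path(project_root, "README.md")
)

list.files(project_root)

# Next steps, run from the project root:
#   git init       (in a terminal)
#   renv::init()   (in R; requires the renv package)
```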

Pre-registration (where applicable)

- Formulate specific, directional hypotheses

- Determine required sample size (power analysis)

- Specify primary and secondary analysis plans

- Specify exclusion criteria and outlier handling procedures

- Register on OSF Registries or AsPredicted before data collection begins
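As an illustration of the power-analysis step, base R's `power.t.test()` can estimate the sample size for a simple two-group comparison. The effect size, significance level, and target power below are hypothetical placeholders; a real study should justify each value, and more complex designs need other methods.

```r
# A priori power analysis for a two-sample t-test (stats package, base R).
# Assumptions (hypothetical): standardised effect size d = 0.5,
# two-sided alpha = 0.05, target power = 0.80.
pa <- power.t.test(delta = 0.5, sd = 1, sig.level = 0.05, power = 0.80,
                   type = "two.sample", alternative = "two.sided")

# Round up to whole units (participants or texts) required per group.
n_per_group <- ceiling(pa$n)
n_per_group
```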

During research

Data collection

- Document all procedures (including deviations from the plan)

- Store raw data separately and never edit raw files

- Maintain a dated data collection log

Data processing

- Comment all code thoroughly: explain why, not just what

- Use descriptive variable names

- Document all decisions in an analysis log

- Set random seeds (set.seed(42) or equivalent)
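The effect of setting a seed can be checked directly. In this small sketch the same seed is set twice and the two random draws are compared; the seed value 42 is arbitrary. Note that the exact numbers drawn can differ between R versions, which is one reason to record the R version as well.

```r
# With the same seed, the same random draw is produced on every run.
set.seed(42)
first_draw <- sample(1:100, 5)

set.seed(42)
second_draw <- sample(1:100, 5)

identical(first_draw, second_draw)  # TRUE: same seed, same sample
```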

Analysis

- Follow the pre-registered plan; if deviating, document clearly and label as exploratory

- Run robustness checks with alternative specifications

- Commit code regularly to Git with informative commit messages

Documentation

- Update the README as the project evolves

- Maintain the analysis log with dated entries

- Create or update the codebook

- Document software versions with sessionInfo()
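A dated analysis log needs nothing more than a plain-text file. The helper below is one possible sketch; `log_decision()`, the log format, and the example entries are hypothetical, and the log is written to a temporary file here rather than to the project folder.

```r
# Append a dated entry to a plain-text analysis log.
# log_decision() and the entry format are hypothetical.
log_decision <- function(entry, log_file) {
  line <- sprintf("%s | %s", format(Sys.Date(), "%Y-%m-%d"), entry)
  cat(line, "\n", sep = "", file = log_file, append = TRUE)
  invisible(line)
}

# A fresh temporary file; in a project the log would sit next to the README.
log_file <- tempfile(pattern = "analysis_log_", fileext = ".txt")
log_decision("Excluded texts with fewer than 100 tokens.", log_file)
log_decision("Switched to lemmatised search terms.", log_file)

readLines(log_file)
```

Because the log is plain text, it can be committed to Git along with the code, so each decision is tied to the state of the analysis at that point.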

Before publication

Code review

- Confirm code runs from scratch on a clean environment

- Replace all hard-coded paths with relative paths

- Ensure all dependencies are documented in renv.lock

- Add explanatory comments to any complex sections
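The difference between a hard-coded and a relative path can be shown in a few lines. This sketch assumes that R is started in the project root and uses a hypothetical file name; the absolute path in the comment is likewise invented for illustration.

```r
# Hard-coded absolute path: works on one machine only (shown as a comment).
# bad_path <- "C:/Users/me/Documents/project/raw/corpus_sample.csv"

# Relative path built from components: works wherever the project folder
# is copied, as long as R is started in the project root.
raw_file <- file.path("raw", "corpus_sample.csv")  # hypothetical file name
raw_file

# Guard before reading, so that a missing file gives a clear message.
if (file.exists(raw_file)) {
  corpus_sample <- read.csv(raw_file)
} else {
  message("File not found: ", raw_file, " (run from the project root)")
}
```

The here package, which appears in the session information at the end of this tutorial, offers `here::here("raw", "corpus_sample.csv")` as an alternative that resolves paths from the project root regardless of the working directory.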

Data preparation

- Deidentify data if ethically required

- Write a comprehensive codebook

- Check for errors and inconsistencies in the final dataset

- Document all data sources
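A codebook skeleton can be generated from the dataset itself and then completed by hand. The sketch below uses a toy data frame with hypothetical variables; the choice of columns (type, missing values, description) is one reasonable layout, not a fixed standard.

```r
# Toy dataset (hypothetical variables).
dat <- data.frame(
  speaker_id = c("S01", "S02", "S03"),
  age        = c(34, NA, 51),
  register   = factor(c("spoken", "written", "spoken"))
)

# Skeleton codebook: one row per variable, with type and missingness.
# The description column is left empty, to be filled in by hand.
codebook <- data.frame(
  variable    = names(dat),
  type        = vapply(dat, function(x) class(x)[1], character(1)),
  n_missing   = vapply(dat, function(x) sum(is.na(x)), integer(1)),
  description = "",
  row.names   = NULL
)
codebook
```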

Repository and DOI

- Choose an appropriate repository (Zenodo, OSF, institutional RDM)

- Upload all shareable materials: data, code, README, codebook, materials

- Obtain a DOI before submission

- Set an appropriate licence (CC-BY 4.0 is recommended for most research outputs)

- Test that materials are accessible before citing the DOI in the manuscript

Computational environment

- Run renv::snapshot() to update the lockfile

- Record R version and key package versions

- Include sessionInfo() output in the supplementary materials
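Saving the session information to a file makes it easy to attach to the supplementary materials. In this sketch the file name is hypothetical and the file is written to a temporary folder; in a project it would more naturally live in output/.

```r
# Save the session information to a text file.
session_file <- file.path(tempdir(), "session_info.txt")  # hypothetical name
writeLines(capture.output(sessionInfo()), session_file)

# The R version on its own, for the README or the methods section.
R.version.string

# With renv installed, renv::snapshot() updates renv.lock (not run here).
```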

At publication

Manuscript

- Include a data/code availability statement with the repository DOI

- Cite data and code in the reference list

- Reference the pre-registration (if applicable) and report any deviations from it

- Report all analyses, not only significant ones

Repository

- Make the repository public on acceptance (if embargoed during review)

- Respond promptly to data access requests

- Update the repository if post-publication errors are identified

Part 6: Troubleshooting Common Challenges

Common obstacles to reproducible research practice, and how to address them.

“My code is messy”

Most researchers feel this way. The appropriate response is not to delay sharing until the code is “perfect”, because that day rarely arrives. Working code that is shared and commented is far more valuable than polished code that stays on your hard drive. Start by adding comments that explain intent (not just mechanics), use consistent naming conventions, and test that the code runs from scratch in a clean environment. Sharing imperfect code helps the field and tends to attract collaborative improvement.

“I do not have time for this”

The time investment for setting up reproducible practices is real but front-loaded: roughly four to six hours for initial setup. Ongoing maintenance runs to about thirty minutes per week. This is typically offset many times over by time saved finding files, recovering lost analyses, responding to reviewer queries about methods, and handing projects to collaborators or research assistants. Starting small — folder structure and README in week one, Git in week two, documentation in week three — makes the transition manageable.

“My collaborators do not care”

You can only directly control your own practices. A practical strategy is to make reproducible practices convenient for collaborators rather than to demand them: set up the folder structure and Git repository yourself, create README templates they can fill in, and demonstrate efficiency gains over time. Emphasising that funder and journal requirements are moving in this direction tends to be persuasive.

“My field does not do this”

Linguistics has historically lagged behind the natural and social sciences in open science practice, but this is changing rapidly. The special issues edited by Sönning and Werner (2021), Schweinberger (2026), and Schweinberger and Haugh (2025b) document both the gap and the ongoing transformation. Early adopters in a field receive disproportionate recognition and have greater influence over the norms that eventually emerge. The practices described in this tutorial are the direction the field is moving, not a departure from it.

Resources

Key tools, learning resources, and communities for reproducible research practice.

Tools

Version control: Git, GitHub, GitLab

Notebooks: Quarto (recommended), R Markdown, Jupyter

Environment management: renv (R), conda (Python), Docker

Data repositories: Zenodo, OSF, Figshare, UQ RDM

Pre-registration: OSF Registries, AsPredicted, Registered Reports

Learning resources

- The Turing Way — a comprehensive community-driven handbook for reproducible research

- British Ecological Society Guide — practical guide with workflow templates

- LADAL Reproducibility with R — a hands-on practical companion tutorial

- Software Carpentry and Data Carpentry — workshops and lesson materials

Key papers:

- Baker (2016): Nature survey of researcher experiences

- Munafò and Davey Smith (2018): manifesto for reproducible science with a practical reform agenda

- Nosek and Errington (2020): review of replication and reproducibility across sciences

- Goodman, Fanelli, and Ioannidis (2016): clarifying the concepts of reproducibility

- Wilson et al. (2017): good enough practices in scientific computing

- Sönning and Werner (2021): the replication crisis and its implications for linguistics

- Schweinberger (2026): reproducibility, replication, and robustness in corpus linguistics (special issue)

- Flanagan (2025): empirical assessment of the four reproducibility dimensions in corpus linguistics

- Schweinberger (2025): practical implications for corpus-linguistic research design and reporting

Communities

- Center for Open Science — tools, training, and advocacy for open research

- ReproducibiliTea — international network of journal clubs discussing reproducibility

- rOpenSci — R packages and community for reproducible research

Quick Reference

Reproducibility workflow summary

Every new project:

1. Create standard folder structure

2. Initialise Git (git init) and make initial commit

3. Create README

4. Set up renv (renv::init())

5. Consider pre-registration

Every analysis session:

1. Pull latest (git pull)

2. Work on code and analysis

3. Commit frequently with descriptive messages

4. Update documentation (README, analysis log)

5. Push to remote (git push)

Before publication:

1. Confirm code runs from scratch in a clean environment

2. Document environment (renv::snapshot(); sessionInfo())

3. Write or update codebook

4. Obtain DOI for data/code repository

5. Upload all shareable materials; set licence

Red flags for non-reproducibility

Watch out for: no version control; data in emails or unnamed desktop folders; hard-coded file paths; no documentation; manual data processing steps with no code; multiple files named “final”; software or package versions not recorded; analysis decisions that are not reported.
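One of these red flags, hard-coded file paths, can be partly checked automatically. The function below is a rough sketch: `find_hard_coded_paths()` is a hypothetical helper, and its search pattern is a heuristic assumption that catches `setwd()` calls and common absolute-path prefixes but will miss other cases.

```r
# Flag lines in R scripts that look like absolute paths or setwd() calls.
# The pattern is a rough heuristic (an assumption), not an exhaustive check.
find_hard_coded_paths <- function(dir = ".") {
  scripts <- list.files(dir, pattern = "\\.[Rr]$", recursive = TRUE,
                        full.names = TRUE)
  pattern <- "setwd\\(|[\"'][A-Za-z]:[/\\\\]|[\"']/(Users|home)/"
  hits <- lapply(scripts, function(f) {
    lines <- readLines(f, warn = FALSE)
    idx <- grep(pattern, lines)
    if (length(idx) == 0) return(NULL)
    data.frame(file = f, line = idx, code = trimws(lines[idx]))
  })
  do.call(rbind, hits)  # NULL when nothing is flagged
}

# Demonstration on a temporary script with one bad and one good line.
demo_dir <- tempfile("scan_demo_")
dir.create(demo_dir)
writeLines(
  c('dat <- read.csv("C:/Users/me/project/raw/data.csv")',
    'ok  <- read.csv(file.path("raw", "data.csv"))'),
  file.path(demo_dir, "analysis.R")
)
flagged <- find_hard_coded_paths(demo_dir)
flagged
```

Running a check like this before publication complements, but does not replace, testing that the code runs from scratch in a clean environment.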

Green flags for reproducibility

Look for: a Git repository with meaningful commit history; a standard folder structure; a README explaining how to reproduce the analysis; commented code in a notebook; an renv.lock file; a codebook; a public repository with a DOI; a data/code availability statement in the manuscript; pre-registration for confirmatory hypotheses.

Citation & Session Info

Martin Schweinberger. 2026. Reproducible Research: Principles and Practice. The Language Technology and Data Analysis Laboratory (LADAL), The University of Queensland, Australia. url: https://ladal.edu.au/tutorials/repro/repro.html (Version 2026.03.28), doi: .

@manual{martinschweinberger2026reproducible,

author = {Martin Schweinberger},

title = {Reproducible Research: Principles and Practice},

year = {2026},

note = {https://ladal.edu.au/tutorials/repro/repro.html},

organization = {The Language Technology and Data Analysis Laboratory (LADAL), The University of Queensland, Australia},

edition = {2026.03.28},

doi = {}

}

sessionInfo()

R version 4.4.2 (2024-10-31 ucrt)

Platform: x86_64-w64-mingw32/x64

Running under: Windows 11 x64 (build 26200)

Matrix products: default

locale:

[1] LC_COLLATE=English_United States.utf8

[2] LC_CTYPE=English_United States.utf8

[3] LC_MONETARY=English_United States.utf8

[4] LC_NUMERIC=C

[5] LC_TIME=English_United States.utf8

time zone: Australia/Brisbane

tzcode source: internal

attached base packages:

[1] stats graphics grDevices datasets utils methods base

other attached packages:

[1] checkdown_0.0.13 gapminder_1.0.0 lubridate_1.9.4 forcats_1.0.0

[5] stringr_1.6.0 dplyr_1.2.0 purrr_1.2.1 readr_2.1.5

[9] tibble_3.3.1 ggplot2_4.0.2 tidyverse_2.0.0 tidyr_1.3.2

[13] here_1.0.2 DT_0.33 kableExtra_1.4.0 knitr_1.51

loaded via a namespace (and not attached):

[1] generics_0.1.4 renv_1.1.7 xml2_1.3.6

[4] stringi_1.8.7 hms_1.1.4 digest_0.6.39

[7] magrittr_2.0.4 evaluate_1.0.5 grid_4.4.2

[10] timechange_0.3.0 RColorBrewer_1.1-3 fastmap_1.2.0

[13] rprojroot_2.1.1 jsonlite_2.0.0 BiocManager_1.30.27

[16] viridisLite_0.4.2 scales_1.4.0 codetools_0.2-20

[19] cli_3.6.5 rlang_1.1.7 litedown_0.9

[22] commonmark_2.0.0 withr_3.0.2 yaml_2.3.10

[25] tools_4.4.2 tzdb_0.5.0 vctrs_0.7.2

[28] R6_2.6.1 lifecycle_1.0.5 htmlwidgets_1.6.4

[31] pkgconfig_2.0.3 pillar_1.11.1 gtable_0.3.6

[34] glue_1.8.0 systemfonts_1.3.1 xfun_0.56

[37] tidyselect_1.2.1 rstudioapi_0.17.1 farver_2.1.2

[40] htmltools_0.5.9 rmarkdown_2.30 svglite_2.1.3

[43] compiler_4.4.2 S7_0.2.1 markdown_2.0

This tutorial was revised and restyled with the assistance of Claude (claude.ai), a large language model created by Anthropic. All new content (the corpus linguistics section, the integrated references, the exercises, and the revised prose) was reviewed and approved by Martin Schweinberger, who takes full responsibility for the tutorial’s accuracy and completeness.